Part of the Oxford Instruments Group

Discover how the unison between classical and quantum computing will help shape the future of scientific discovery with less wiring and higher qubit counts.

Dr. Matthew Hutchings, CPO and co-founder of Seeqc, caught up with Oxford Instruments NanoScience’s Director of Engineering, Matt Martin, earlier this month to discuss Seeqc’s mission, the vital relationship between classical and quantum computing, short- and long-term goals in this space, and what we can expect to see in the future.

Seeqc spun out from Hypres, a world leader in commercial superconductive systems, and is known for commercialising the world’s first ultra-fast digital superconducting technology using Rapid Single Flux Quantum (RSFQ) logic. We’re one of the only multi-layer commercial superconductor chip foundries worldwide, with over $100m in investment, 26 employees and a significant patent portfolio, delivering complete superconductor computing systems.

At Seeqc, we see quantum computing as a global opportunity, so we work with various global partners and government bodies. We’re headquartered in the US, with important R&D operations in the UK and EU, and we collaborate with academic groups around the world. Roughly a quarter of our team is based in the UK, working on exciting projects with organisations like Oxford Instruments NanoScience.

An example of the current problem we’re facing can be found in drug discovery and drug modelling. Both are becoming increasingly difficult, and we're relying more heavily on lab testing to build discoveries and make sure they function as they should. For example, replicating a penicillin molecule on a classical computer would take 10 to the 86 bits of computation, which is a big number. But you could potentially replicate what that 10 to the 86 bit system is trying to do with just 286 perfect qubits, and so re-create a penicillin molecule.
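
The two figures above are linked by simple arithmetic: n qubits span 2 to the n basis states, and 2 to the 286 is just over 10 to the 86. The short Python check below is only an illustrative sketch; the function name `qubits_to_match` is made up for this example and is not Seeqc code.

```python
import math

def qubits_to_match(classical_exponent: float) -> int:
    """Smallest n such that 2**n >= 10**classical_exponent.

    Hypothetical helper for illustration: n ideal qubits span 2**n basis states.
    """
    return math.ceil(classical_exponent / math.log10(2))

n = qubits_to_match(86)
print(n)                    # number of ideal qubits needed
print(2**n >= 10**86)       # the 2**n state space covers 10**86
print(2**(n - 1) >= 10**86) # one qubit fewer is not enough
```

Each extra qubit doubles the state space, which is why a modest qubit count can stand in for an astronomically large classical one.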

However, building perfect qubits and controlling them is extremely challenging, which is why alternatives have been investigated. We know that we can make “noisy qubits”, and it has been discovered that once you have a large enough number of them, you can use the larger system to encode logical, or more perfect, qubits.
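
To give a feel for why many noisy units can behave like one better unit, here is a deliberately simplified classical analogy: a repetition code decoded by majority vote. It is an assumption-laden sketch, not the quantum error correction scheme Seeqc or anyone else uses. It assumes independent errors with the same probability `p` on every copy, and the function name `logical_error_rate` is hypothetical.

```python
from math import comb

def logical_error_rate(p: float, n: int) -> float:
    """Probability that a majority of n independent copies are wrong.

    Classical repetition-code analogy (assumption): each copy flips
    independently with probability p, and n is odd so a majority exists.
    """
    k_min = n // 2 + 1  # smallest number of flipped copies that fools the vote
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(k_min, n + 1))

# With a 1% error per copy, adding copies drives the encoded error rate down.
for n in (1, 3, 5, 7):
    print(n, logical_error_rate(0.01, n))
```

Real quantum codes are considerably more involved, but the trend is the same: below a certain physical error rate, adding more noisy qubits makes the encoded qubit more reliable.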

We’re using the power of classical computing to back up the power of quantum computing, creating a hybrid system that partners a powerful classical computer with a powerful quantum computer. If you partner the two well, you can come up with clever ways to map algorithms so that the computationally difficult parts run on the quantum computer, while the rest of the application runs on the classical computer.

With this clever algorithm thinking, we can target some exciting applications in chemistry, materials science, machine learning, and finance, to name a few, and do some truly impactful work in the algorithm space. Going back to drug discovery, drugs themselves are larger molecules, meaning they might need a larger computer to simulate. However, these massive advancements in algorithm technology, where we get more power by better embedding our quantum computer in classical computing, could allow us to bring these kinds of exciting applications much nearer.

There's a huge amount of work being done in this algorithm space. Back in the 1940s, room-scale classical computers had to be operated by people physically plugging in and unplugging switches. It was a mess of cables, and it was the responsibility of operators to protect and control the delicate bits of computation. Today's state-of-the-art quantum computer by Google is analogous, with lots of wiring and complexity that still needs delicate control. It took the invention of the integrated circuit by Fairchild, and then Intel, to really take classical computing forward.

IBM has a fantastic roadmap for scaling this type of quantum computer, building a quantum computing fridge in a big trench. However, this is not only a physically challenging job but also a much more brute-force scaling approach, and in truth, it would be better to figure out how to make the system itself more scalable. A second approach is to interconnect different fridges and different computers. This idea came from Andreas Wallraff’s Group, which connected two quantum computing chips in a superconducting manner over a five-metre distance. However, what they're really doing is taking the same concept and making it bigger and more complex, which in our opinion isn’t the answer to achieving a commercially scalable quantum computer.

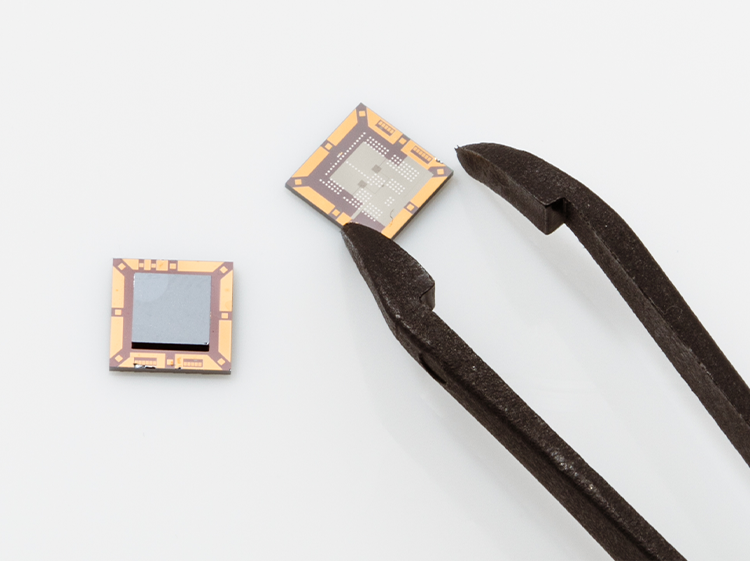

We know that the classical computer didn't get commercialised by making it larger, which is why we rethought the technology around integrated circuits. We're now onto the third generation of our superconducting controller module that digitally does all of the qubit control and readouts, distilled and integrated onto this chip. We then coupled it with qubits using a patented technology that maintains qubit performance, and in doing so, we've created what we deem to be the integrated circuit for the quantum computing era.

Our digital controller allows us to reduce the system complexity, including the number of wires and systems that plug into this chip (there’s more on this next). This is because digital technology allows us to multiplex systems significantly better than analogue systems. Analogue systems are inherently difficult to stabilise against environmental noise, so by having a digital interface at the qubits, we can massively reduce the environmentally induced error rates to create a more manufacturable and cost-effective system.

We need to make a manufacturable quantum computing system. Superconducting quantum computers operate at gigahertz speeds. For our classical logic to keep up with our quantum logic, it needs to run a lot faster. Megahertz speeds, which are what conventional computer architectures manage when cooled to the extreme temperatures qubits operate at, don't cut it, which is why we built superconducting technologies that run at 10 gigahertz, so they can keep pace.

Going back to the scaling issue of the early classical computing era, the head of Bell Labs at the time coined the phrase “tyranny of numbers”. It means that every time you add a bit, you have to add another wire. Quantum computers today have a similar kind of wiring overhead issue, but Seeqc’s integrated chip allows you to add significantly fewer wires per qubit. We could build a million-qubit processor within the cryogenic support technology that Oxford Instruments NanoScience is building today, so scaling is much more of a technology innovation issue.

We want faster speeds, lower latency and reduced power consumption, which also means a much more manufacturable and cost-effective system. Customers aren't going to spend hundreds of millions on their own quantum computer, so we need to make a quantum computing system that’s more accessible for everyone.

Visit their website: https://seeqc.com/

Dr. Matthew Hutchings